How Much Data Should You Have Before Examining an Ad Test Result?

By Brad | November 10, 2015

Before you waste your time examining ad tests, you want to ensure that every ad has reached a minimum data threshold.

Within any sample set, it is easy to find a pattern that is due to chance. However, as the amount of data you have increases, the likelihood that a statistically significant result is due to chance decreases.

For instance, if you spin a roulette wheel 4 times, there is roughly a 1/16 chance that it will be black every time (treating black as a 50/50 outcome and ignoring the green pockets). Over the course of 100 spins, you’ll likely see 45-55 black results. Over the course of 1000 spins, you’ll probably see 475-525 black results. As the data increases, the relative swing caused by randomness shrinks: the likely range narrows from 45-55% black to 47.5-52.5% black.
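The roulette figures can be checked with an exact binomial calculation. This is a minimal sketch that assumes black is a fair 50/50 outcome (a real wheel has green pockets, so the true odds are slightly lower); the function name `prob_between` is made up for illustration.

```python
from math import comb


def prob_between(n: int, lo: int, hi: int, p: float = 0.5) -> float:
    """Exact binomial probability of seeing between lo and hi successes
    (inclusive) in n independent trials with success probability p."""
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(lo, hi + 1))


# Chance that 4 spins are all black, assuming a 50/50 outcome.
four_blacks = prob_between(4, 4, 4)

# Chance of landing inside the ranges quoted in the text.
share_100 = prob_between(100, 45, 55)
share_1000 = prob_between(1000, 475, 525)

print(f"4 blacks in 4 spins:       {four_blacks:.4f}")
print(f"45-55 blacks in 100 spins: {share_100:.2f}")
print(f"475-525 in 1000 spins:     {share_1000:.2f}")
```

The band for 1000 spins is half as wide in percentage terms as the band for 100 spins, yet the result is more likely to land inside it, which is the sense in which more data leaves less room for randomness.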

In a purely layman’s mathematical sense, confidence factors are based upon the idea that a strong pattern in enough data is unlikely to be caused by random events. Another way of saying this is that confidence factors assume the data gathered during a test will be similar to the data that comes after the test; hence you can be ‘confident’ that the later data will look like the earlier data, and you can act on that basis.

In a PPC world, we have another factor to take into account – changes in search behavior based on timeframes.

For instance, imagine these three scenarios:

- You just got to work on a Monday morning and start to search for work-related items

- It’s lunchtime on the last Monday of the month and your rent is due soon and you want to figure out finances

- You’re relaxing after dinner on a Monday and you remember something about your day and you want to search more about that item

All three searches happen on a Monday, yet they behave very differently. Your Monday evening search probably happened on a mobile phone, and your conversion is going to be sending yourself a work reminder to examine the result on Tuesday morning. Your Monday morning conversion was more likely a whitepaper download or a phone call.

And that’s just Monday. If you were searching for vacation cruises, your Monday search might be daydreaming about how much you want to escape the office, while the same search on a Saturday afternoon might be planning with your spouse for a cruise vacation you intend to buy.

As timeframes change, so does search behavior – this is why we need to take into account not just the data, but the timeframe of the data. You should always use a minimum of a week of data. However, it is fine to use a month or even three months of data gathering before you take action.

This is also why you should define your minimum data before running any confidence calculations.

For instance, this is a test result after 97 impressions:

| Ad | Impressions | Clicks | CTR | Confidence |
|---|---|---|---|---|
| Control | 40 | 1 | 2.5% | |
| Ad 2 | 33 | 5 | 15.15% | 97.03% |
| Ad 3 | 24 | 0 | 0% | 15.57% |

In purely math terms, we do have 97% confidence that ad 2 will be a winner. If this were a static data environment, where the data that comes later is similar to the data that came before, we might take an action. However, search is a dynamic environment, and it’s obvious that 97 impressions is not enough data (although any online calculator will tell you that it is).
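The article does not say which formula produced the Confidence column. One common choice, a one-sided two-proportion z-test with an unpooled standard error, comes out very close to the figures in the table above, so the sketch below uses it as an assumption rather than as the tool's actual method. The function name `confidence_vs_control` is hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def confidence_vs_control(ctrl_impr: int, ctrl_clicks: int,
                          ad_impr: int, ad_clicks: int) -> float:
    """One-sided confidence that the ad's true CTR beats the control's.

    Assumption: normal approximation with an unpooled standard error.
    This is a guess at the kind of formula behind the table, not a
    confirmed implementation.
    """
    p_ctrl = ctrl_clicks / ctrl_impr
    p_ad = ad_clicks / ad_impr
    std_err = sqrt(p_ctrl * (1 - p_ctrl) / ctrl_impr + p_ad * (1 - p_ad) / ad_impr)
    if std_err == 0:
        raise ValueError("no variation in either ad; confidence is undefined")
    z = (p_ad - p_ctrl) / std_err
    return NormalDist().cdf(z)


# Control: 40 impressions, 1 click. Ad 2: 33 impressions, 5 clicks.
ad2 = confidence_vs_control(40, 1, 33, 5)
# Ad 3: 24 impressions, 0 clicks.
ad3 = confidence_vs_control(40, 1, 24, 0)

print(f"Ad 2 vs control: {ad2:.2%}")
print(f"Ad 3 vs control: {ad3:.2%}")
```

Note that nothing in the calculation knows how small the sample is beyond its effect on the standard error. With one click in the control, a single extra click would move the result substantially, which is why a minimum data check belongs in front of a function like this.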

Here’s the exact same test after 3163 impressions:

| Ad | Impressions | Clicks | CTR | Confidence |
|---|---|---|---|---|
| Control | 1023 | 23 | 2.25% | |
| Ad 2 | 993 | 29 | 2.92% | 82.9% |
| Ad 3 | 1147 | 56 | 4.88% | 99.96% |

In this case, every ad has roughly 1,000 impressions or more, and we’re 99.96% confident in our winner (a different winner than at 97 impressions). At this point we can be confident in taking action on CTR-based testing.

At the low data levels, what you really want to avoid is having just one or two people significantly affect your data. For instance, if you have 100 impressions and 1 click, then you have a 1% CTR. If the next 2 people click your ad, your CTR goes from 1% to 2.94% (3 clicks on 102 impressions), which is a huge change and can completely alter which ad you would have chosen as the winner or loser.
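The arithmetic is easy to reproduce. The sketch below assumes each additional searcher both sees and clicks the ad, so every extra click also adds one impression; the helper name `ctr_after_extra_clicks` is made up for illustration.

```python
def ctr_after_extra_clicks(impressions: int, clicks: int, extra_clicks: int) -> float:
    """CTR in percent after extra_clicks more searchers each see and click the ad."""
    return 100 * (clicks + extra_clicks) / (impressions + extra_clicks)


# Same starting CTR of 1%, same two extra clicks, very different sample sizes.
small_sample = ctr_after_extra_clicks(100, 1, 2)        # 3 clicks / 102 impressions
large_sample = ctr_after_extra_clicks(10_000, 100, 2)   # 102 clicks / 10,002 impressions

print(round(small_sample, 2))  # 2.94
print(round(large_sample, 2))  # 1.02
```

Two clicks nearly triple the CTR at 100 impressions but barely register at 10,000.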

As the data grows, you want a sample size large enough that a small percentage of searchers can’t significantly affect your results. This is why the more impressions an ad test generates within a given time frame, the larger your minimum sample size should be.

Minimum Data Recommendations

We’re often asked to suggest minimum data amounts. There are times I’m hesitant to give out numbers because not everyone should be using the same numbers.

If you have a brand term that is searched 1 million times a week, you should be using at least a million impressions as your minimum. Many brands aren’t searched 1 million times in a year, and they should be happy with 10,000 – 100,000 impressions before they examine their confidence levels.

These are MINIMUM DATA recommendations. It is OK to use higher numbers than these.

Minimum Data Recommendations for Most Companies:

| Traffic level | Impressions | Clicks | Conversions |
|---|---|---|---|
| Low Traffic | 350 | 300 | 7 |
| Mid Traffic | 750 | 500 | 13 |
| High Traffic | 1000 | 1000 | 20 |
| Well-known brand terms | 100,000 | 10,000 | 100 – 1000 |

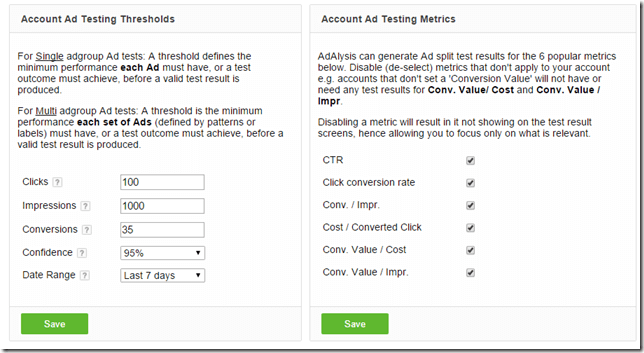
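One way to apply these recommendations is to gate any confidence calculation behind a minimum data check. The sketch below is only an illustration: the names `MinimumData`, `RECOMMENDED`, and `ads_below_minimum` are hypothetical, the article does not say whether all three thresholds must be met at once (so the caller picks which metrics to check), and the brand-term row is left out because its conversion minimum is given as a range. The seven-day floor follows the advice above to use at least a week of data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MinimumData:
    impressions: int
    clicks: int
    conversions: int


# Thresholds copied from the recommendations table.
RECOMMENDED = {
    "low": MinimumData(impressions=350, clicks=300, conversions=7),
    "mid": MinimumData(impressions=750, clicks=500, conversions=13),
    "high": MinimumData(impressions=1000, clicks=1000, conversions=20),
}


def ads_below_minimum(ads, minimum, metrics=("impressions",),
                      days_running=7, min_days=7):
    """Return the names of ads that have not yet reached the minimum data.

    ads maps an ad name to a dict of metric totals. Only the metrics the
    caller lists are checked (an assumption, see the note above). If the
    test has run for fewer than min_days, every ad is reported.
    """
    if days_running < min_days:
        return sorted(ads)
    return sorted(
        name for name, stats in ads.items()
        if any(stats.get(metric, 0) < getattr(minimum, metric) for metric in metrics)
    )


# The two snapshots of the CTR test shown earlier in the article.
early = {"Control": {"impressions": 40}, "Ad 2": {"impressions": 33},
         "Ad 3": {"impressions": 24}}
later = {"Control": {"impressions": 1023}, "Ad 2": {"impressions": 993},
         "Ad 3": {"impressions": 1147}}

print(ads_below_minimum(early, RECOMMENDED["low"]))   # all three ads
print(ads_below_minimum(later, RECOMMENDED["mid"]))   # empty list
print(ads_below_minimum(later, RECOMMENDED["high"]))  # Ad 2 is 7 impressions short
```

An empty list means every ad has cleared the chosen threshold and the confidence numbers are worth looking at.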

Setting Your Minimum Data in Adalysis

As your campaigns are often segmented by brand, product terms, long tail, etc., the ads within each campaign can generally use the same minimum data. We have fairly low minimums in the system, as these numbers are the absolute minimum that anyone should use. However, you can increase your minimums for the account or for any one campaign (each campaign can have different minimums), depending on how much data you want to have before you see test results.

Note: We suggest that new users don’t change their minimum data right after opening an account. It is useful to start seeing some results and working with the data even if the numbers are low so you can get a sense of your data and results and then raise the numbers once you get a feel for the system.

In your account or campaign settings you can change your minimum data and see all the minimums across your account.

The next time you’re thinking about statistical significance or you see someone else’s test data, keep the minimum data thresholds in mind. If you see case studies with 40 impressions, the results are as likely due to randomness as to a real insight, whereas a result from a test with 100,000 impressions is likely due to a real change in the ad copy.

Suggested reading: Working with Statistical Significance: How confident should you be in your test results?
