How to Ensure You Analyze the Correct Data, Time Periods, Timeframes, and Trends for Diagnosing and Optimizing Your PPC Account

By Brad | July 2, 2019

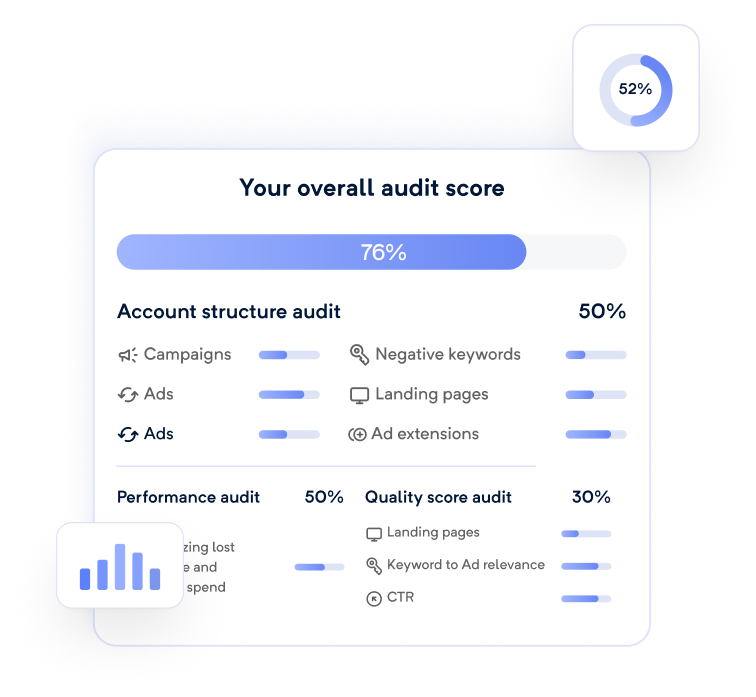

In our ongoing series on analyzing data changes with the Performance Analyzer (available as a Data Studio template or an Automated Tool for Adalysis subscribers), today we’re going to look at trending.

If you want to know which metrics made your account performance go up or down, you need to understand how metrics are related (which the report can automatically show you), which time frame to analyze, and which time frame to compare it against. Should you compare to the previous period (such as month over month), year over year, or a custom time frame?

Choosing Your Initial Time Frame

In most PPC tools, and in the Google Ads and Microsoft Ads interfaces, you will see a list of date ranges: yesterday, last 7 days, last week, this month, last month, and so forth.

This is the initial timeframe you will be using in your data comparison.

Comparing today vs. yesterday is unreliable, as today’s data is incomplete and you might not know when it was last updated. If you have a large amount of data and watch your trends daily, then you can compare yesterday vs. the previous day to see how the data changed and dig into what is causing the changes.

Most accounts do not generate enough data for any single day to show a trend. Generally, the last 7 days or last week works well for mid-sized accounts, as you have enough data to start to see trends.

For smaller accounts, the last 14 or last 30 days is a better choice.

When looking at individual campaigns or ad groups for large accounts, using last 7 to last 30 is also a good idea since you’re no longer looking at an entire account but a specific campaign or sets of campaigns.

These short time frames are for seeing exactly what is happening right now and making optimization decisions. They will not show the bigger trends. For that, look at the last quarter or the last 90 days of data, which is far less sensitive to the day-to-day fluctuations that occur in every account.

How Much of Your Account Should You Compare

The old analytics adage ‘averages lie, you must segment your data at least once, and often twice, to get to a real answer’ applies to data comparisons as well. Here’s how we should think about data segmentation for comparisons.

Account: If you want an entire account picture, often used in reporting, then you can do a comparison of the whole account. That will hide scenarios where one campaign had a significant decline, and another had a substantial increase in data.

The biggest downside to this report is that account-wide CTR and conversion rate become nearly useless. It’s easy for your display campaigns to have significant impression increases and pull your overall CTR down while you still have the same number of conversions. With Smart Display pricing, an increase in clicks might come with a decrease in CPC, so you get more clicks for the same spend. Your CPA can then stay the same while your conversion rate declines (more clicks with the same number of conversions means a lower conversion rate).
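The arithmetic behind both effects can be sketched in a few lines of Python. All numbers below are hypothetical and chosen only to make the effect easy to see; they do not come from a real account.

```python
def ratio(numerator: float, denominator: float) -> float:
    """Plain ratio, used here for CTR, conversion rate, CPC, and CPA."""
    return numerator / denominator

# Part 1: blended CTR. Search stays flat; display triples its impressions
# at the same display CTR (0.2%). All figures are hypothetical.
search = {"impressions": 10_000, "clicks": 500}            # 5% CTR
display_before = {"impressions": 100_000, "clicks": 200}   # 0.2% CTR
display_after = {"impressions": 300_000, "clicks": 600}    # 0.2% CTR

def blended_ctr(*networks: dict) -> float:
    clicks = sum(n["clicks"] for n in networks)
    impressions = sum(n["impressions"] for n in networks)
    return ratio(clicks, impressions)

before_ctr = blended_ctr(search, display_before)
after_ctr = blended_ctr(search, display_after)
print(f"Account CTR: {before_ctr:.2%} -> {after_ctr:.2%}")

# Part 2: same spend and conversions, twice the clicks at half the CPC.
spend, conversions = 1_000.0, 20
clicks_before, clicks_after = 500, 1_000

cpa_before = ratio(spend, conversions)
cpa_after = ratio(spend, conversions)
cvr_before = ratio(conversions, clicks_before)
cvr_after = ratio(conversions, clicks_after)
print(f"CPA: {cpa_before:.2f} -> {cpa_after:.2f}")
print(f"Conversion rate: {cvr_before:.1%} -> {cvr_after:.1%}")
```

Neither network’s own CTR changed in the first part, yet the account-level CTR fell. In the second part, CPA is flat while the conversion rate halves. This is why the account view is fine for reporting but weak for optimization decisions.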

Network Types: Looking at all display, search, shopping, and video independently can let you see trends within a specific network. This data is much more useful than the entire account when thinking beyond reporting to making optimization decisions. However, it still suffers from some problems:

- Brand and non-brand search data are combined, hiding any trends

- Different display targeting types, such as remarketing, similar audiences, and Gmail, behave very differently, and combining them hides those differences.

Like Campaigns: Often, you can break your campaigns into areas like this:

- Brand Search

- Non-Brand Search

- RLSA Search

- DSA

- Remarketing

- In-Market

- Gmail

- etc.

You probably already know how your campaign types differ and how you like to look at data. Using campaign labels, it is easy to compare similar campaigns at once.
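As a rough illustration of why grouping by label matters, here is a minimal Python sketch. The campaign names, labels, and conversion counts are hypothetical, and the row layout is an assumption; it is not the export format of any particular tool.

```python
from collections import defaultdict

# Hypothetical rows: (campaign, label, conversions_current, conversions_previous)
rows = [
    ("Brand - Exact", "Brand Search", 120, 100),
    ("Generic - Shoes", "Non-Brand Search", 40, 60),
    ("Generic - Boots", "Non-Brand Search", 50, 50),
    ("Remarketing - All", "Remarketing", 30, 30),
]

def pct_change(current: float, previous: float):
    """Percent change from previous to current; None when previous is 0."""
    if previous == 0:
        return None
    return (current - previous) * 100 / previous

def change_by_label(rows):
    """Sum conversions per label, then compute the percent change per label."""
    totals = defaultdict(lambda: [0, 0])
    for _campaign, label, current, previous in rows:
        totals[label][0] += current
        totals[label][1] += previous
    return {label: pct_change(cur, prev) for label, (cur, prev) in totals.items()}

by_label = change_by_label(rows)
account_total = pct_change(sum(r[2] for r in rows), sum(r[3] for r in rows))

print(f"Whole account: {account_total:+.1f}%")
for label, change in by_label.items():
    print(f"{label}: {change:+.1f}%")
```

In this made-up data the whole account is flat, while brand search is up and non-brand search is down. The label-level view surfaces the decline that the account total hides.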

Follow the Problem Campaign

With the Performance Analyzer, there are also two ways to look at your data to find what is causing issues without grouping like campaigns first. The first is to click the bullet list icon to see a list of your campaign comparisons. You can then click into the problem areas to see an ad group breakdown and find the issues. The biggest problem with this approach is that if one campaign went down less than another went up, the overall data might look good, and you never notice that there is an issue.

The second is in the Data Studio version: on the second page, a heatmap of data changes by campaign shows where the problem areas are, and you can then run a detailed Performance Analyzer report for just those campaigns.

Once you have decided on your time frame and the campaigns you want to compare, the next step is to choose the time frame you will compare the data against.

The Data Comparison Type

Once you’ve chosen the data you want to compare and the length of the time frame, the last step is deciding what to compare it to for your final analysis. In most cases, the initial time frame and the comparison time frame should be the same length. No one wants to see 7 days versus a month of data.

Let’s look at the three options for time frame comparison.

Previous Period Data Comparison

With previous period comparison, you are comparing your data to the period of time directly before the current time period. For instance, last week will be compared to the week before. The previous month will be compared to the month before.
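If you ever need to build the previous period yourself, for example for a custom report, the date math is short. The sketch below assumes inclusive start and end dates and an equal-length comparison counted in days; the function name is hypothetical. Calendar months differ in length, so a strict month-over-month comparison would need month-aware logic instead.

```python
from datetime import date, timedelta

def previous_period(start: date, end: date) -> tuple[date, date]:
    """Return the equally long range that ends the day before `start`.

    Both `start` and `end` are treated as inclusive.
    """
    length = end - start + timedelta(days=1)   # inclusive length of the range
    prev_end = start - timedelta(days=1)
    prev_start = prev_end - length + timedelta(days=1)
    return prev_start, prev_end

# Example: a Monday-to-Sunday week compared to the week before it.
current = (date(2019, 6, 24), date(2019, 6, 30))
print(previous_period(*current))
```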

For non-seasonal businesses, this is often the best time comparison to use as it lets you see how your changes are affecting your data right now. For a seasonal business, you might use this to make month over month decisions, but when thinking about the big picture, you’re missing a huge component of how seasonal shifts work.

For example, if this is your data (conversions going down and CPA going up), then it looks like there is a massive issue at play. If you are a non-seasonal business, you should be digging into the root cause of this issue to put some fixes in place.

If you are a seasonal business, this chart doesn’t give you a good picture of what is occurring, so let us look at year over year comparisons.

Year over Year Data Comparison

In year over year comparisons, we’re comparing our data to the same period in the previous year. If we take the above chart, where it appeared we were doing pretty poorly, and fill in the year over year trend, we see that we are getting more conversions than in the previous year, although at a slightly higher CPA.
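A year over year range can be derived the same way. This is a minimal sketch that shifts each date back by one calendar year; the function names are hypothetical, and mapping February 29 to February 28 is an assumption made here. It is not necessarily how any particular tool handles leap years. Some analysts shift by 364 days instead so that weekdays line up.

```python
from datetime import date

def same_day_last_year(d: date) -> date:
    """Shift a date back one calendar year."""
    try:
        return d.replace(year=d.year - 1)
    except ValueError:
        # Feb 29 has no counterpart in a non-leap year; fall back to Feb 28.
        return d.replace(year=d.year - 1, day=28)

def year_over_year(start: date, end: date) -> tuple[date, date]:
    """Return the same calendar range in the previous year."""
    return same_day_last_year(start), same_day_last_year(end)

print(year_over_year(date(2019, 6, 1), date(2019, 6, 30)))
```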

Custom Time Frame Comparisons

With custom time frame comparisons, we’re comparing non-traditional time frames. There are a few cases where this is useful, such as when you are a retailer that offers the occasional sale or when your conversions lag well behind your clicks.

Sales Comparisons

You might compare two weeks of sales data that occurred at different parts of the year to see how consumers reacted to each offer. This can give you insights into how each promotion performed and give you ideas for other sales.

Conversion Time Lag

When we’re looking at a small time frame, such as yesterday or last 7 days of data, we’re also assuming a short time lag between clicks and conversions. For example, in this account, it takes about two weeks for someone to convert. Some people do convert very quickly, and others can take a month. Also, the conversion data is added to the account via an offline upload, and there’s often a delay in uploads.

If we look at yesterday versus the previous day, we see that we only had 4 conversions and that our conversions dropped 66%.

Since we know that there is a delay in conversion tracking and that it takes people a while to convert, we select a custom 30-day timeframe starting 14 days ago to accommodate the conversion delays and see how our efforts are really doing. Then we compare that to the previous 30 days.

Looking at the 30-day timeframe that starts 14 days ago gives us a much more accurate picture: 701 conversions. That is a vast difference from the 4 we saw on a single day.
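The lag-adjusted ranges can be computed with a small helper. This sketch reads "30 days starting 14 days ago" as a 30-day window that ends 14 days before today and extends backwards, which is an interpretation. The function name and default values are hypothetical and should be tuned to your own conversion lag.

```python
from datetime import date, timedelta

def lagged_windows(today: date, lag_days: int = 14, window_days: int = 30):
    """Return ((cur_start, cur_end), (prev_start, prev_end)), all inclusive.

    The current window ends `lag_days` before `today`, so late conversions
    have had time to arrive. The previous window is the same length and
    ends the day before the current window starts.
    """
    cur_end = today - timedelta(days=lag_days)
    cur_start = cur_end - timedelta(days=window_days - 1)
    prev_end = cur_start - timedelta(days=1)
    prev_start = prev_end - timedelta(days=window_days - 1)
    return (cur_start, cur_end), (prev_start, prev_end)

current, previous = lagged_windows(date(2019, 7, 2))
print("Current: ", current)
print("Previous:", previous)
```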

Wrap Up

When digging into your metrics to compare data for reporting, optimization decisions, or just analysis, there are three main components to consider.

Time frame length: How long a timeframe will you use in your comparison? If you choose too short a timeframe, you might see large data swings if you are a small company that doesn’t generate much data. If you are a huge company, choosing too long a timeframe can make you miss trends that you could have acted upon much sooner. This is why all KPI monitoring should be automated. When in doubt, the last 7 and last 30 days are good ranges to use.

Data to compare: The next step is deciding what data should be analyzed. If you use your entire account, a change to search data can be overshadowed by display impressions. If you combine brand and non-brand together, a strong brand can hide issues with non-branded search. We prefer to use campaign labels to decide what types of campaigns can be bundled together for data analysis.

Time frame comparison period: Comparing to the previous period is useful for non-seasonal businesses or for seeing what recent changes in your account did to your data. Seasonal businesses should focus more on year over year comparisons. Custom time frames are useful for accommodating conversion delays or comparing two unique periods, such as when two sales occurred.

By following this simple guide and choosing the correct time lengths, data, and comparison periods, you should be able to quickly compare data using the Performance Analyzer within Data Studio or Adalysis. Understanding how your data has changed lets you address any negative changes and learn from the positive ones.

Now that we’ve looked at how to compare data, which will help you isolate where a metric changed, our next series of articles will dig into what to examine when your metrics start changing. For instance, if your impressions are going down, your lost impression share is increasing, or your CTR, conversions, or any of the other metrics included in the Performance Analyzer are changing, what should you investigate?

This series of articles will give you the insights you need so that if you see a change in your data, you can quickly narrow down the cause and easily put a fix into place or pull insights from your data to help make your account more profitable.
